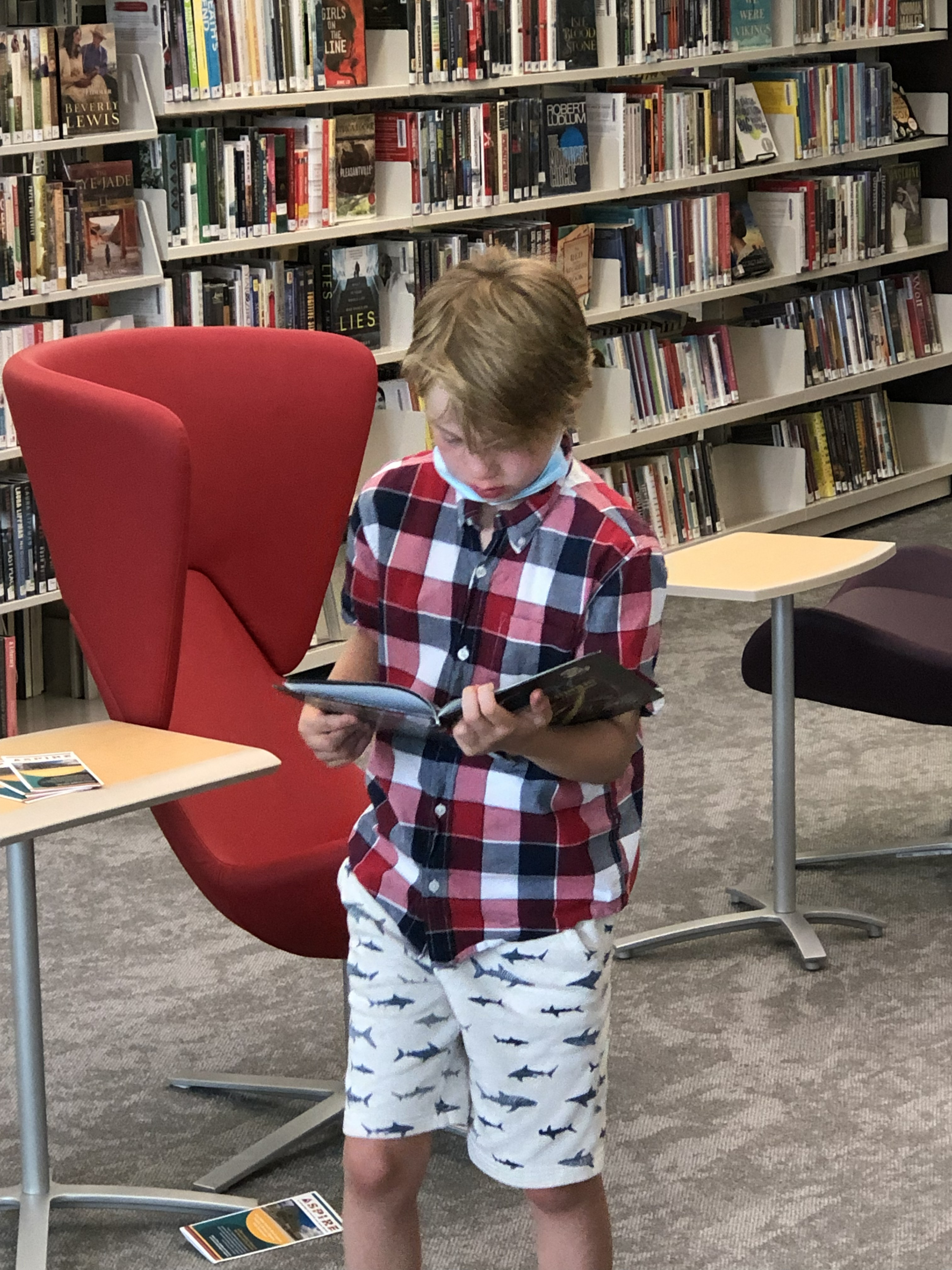

He loved books.

But he couldn’t read them alone.

His teachers did their best.

But they had other students —

and there were only so many hours in the day.

One student needs one human reader. Every single time.

That costs $25/hr. Schools can’t afford it.

Parents can’t always be there.

But we’re in the age of physical AI.

How else might we approach the problem?

The Solution

The Reading Robot

A robot arm that opens books, turns pages, and reads aloud.

How It Works

See → Act → Speak → Repeat

Open the book

Google Gemini assesses the scene. Book open or closed? The robot decides.

Turn the page

Learned motor policies — precise, gentle, repeatable. Retry on failure.

Settle & read aloud

Text streamed to voice. Sub-second latency. Any voice — even grandma’s.

Repeatable task execution with failure handling — no human in the loop.
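The See → Act → Speak → Repeat cycle, with its retry-on-failure step, can be pictured as a small control loop. What follows is a minimal sketch under stated assumptions, not the team's orchestrator. All of these names are hypothetical illustrations:

- `SimulatedBook`, `see`, `open_book`, `turn_page`, `run_reader`
- the `max_retries` and `max_steps` parameters

The simulated book stands in for the camera, the Gemini scene check, the learned motor policies, and the voice pipeline. That way the loop logic itself can run on any machine.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class SimulatedBook:
    """Hypothetical stand-in for the physical book, camera, and arm."""
    pages: list[str]
    is_open: bool = False
    page: int = 0
    flaky_turns: int = 0  # how many page-turn attempts fail before one succeeds


def see(book: SimulatedBook) -> str:
    """SEE: stand-in for the vision check (Gemini in the original). Returns a scene label."""
    if not book.is_open:
        return "closed"
    if book.page >= len(book.pages):
        return "finished"
    return "open"


def open_book(book: SimulatedBook) -> None:
    """ACT: stand-in for the motor policy that opens the cover."""
    book.is_open = True


def turn_page(book: SimulatedBook) -> bool:
    """ACT: stand-in for the page-turn policy plus a check that the page moved."""
    if book.flaky_turns > 0:
        book.flaky_turns -= 1
        return False  # simulated failed grasp: the page did not turn
    book.page += 1
    return True


def run_reader(
    book: SimulatedBook,
    speak: Callable[[str], None],
    max_retries: int = 3,
    max_steps: int = 100,
) -> bool:
    """See -> Act -> Speak -> Repeat. Returns True if the whole book was read."""
    for _ in range(max_steps):
        scene = see(book)
        if scene == "finished":
            return True
        if scene == "closed":
            open_book(book)
            continue
        speak(book.pages[book.page])  # SPEAK: settle, then read the current page aloud
        for _attempt in range(max_retries):
            if turn_page(book):
                break
        else:
            return False  # every retry failed: stop and report rather than loop forever
    return False  # step budget exhausted
```

For example, `run_reader(SimulatedBook(pages=["p1", "p2"]), print)` prints each page in order. The `for ... else` gives up after `max_retries` failed turns instead of retrying forever. A real system might re-run the vision check before each retry rather than trusting the policy's own success signal.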

Why Now

Three capabilities converged

Each existed separately. A $300 robot that sees, reads, and speaks — that’s new.

Why This

The book and the voice must be together.

Audiobooks fail them

85% of books have no audio version. And audiobooks don’t let you hold the real book — kids get lost without the physical page to follow.

Physical disabilities too

Many children can’t turn pages at all. Cerebral palsy, muscular dystrophy, spinal cord injuries. They need a robot hand, not just a robot voice.

A familiar voice

Voice cloning means grandma reads the bedtime story — even when she’s not there. Mom’s voice. A teacher’s voice. Comfort and connection.

240 million children with disabilities.

1 in 7 people.

Every one of them needs a patient, tireless reader.

The Roadmap

From school pilot to consumer companion


Four phases. One mission: build the world's largest paper manipulation dataset while serving 240 million children.

The Economics

Hardware is the wedge. Data is the business.

• 8 strategic partnerships @ $3M/yr

• 20 custom projects @ $300K each
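Taken at face value, the two revenue lines multiply out as follows. This is simple arithmetic on the figures above. The source does not say whether the 20 custom projects recur each year, so that line is shown as a total rather than an annual figure.

```latex
\begin{aligned}
8 \times \$3\text{M/yr} &= \$24\text{M/yr} && \text{(strategic partnerships)} \\
20 \times \$300\text{K} &= \$6\text{M} && \text{(custom projects)}
\end{aligned}
```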

Team

Three engineers. One mission.

Alison Cossette

Vision pipeline, orchestrator, product strategy. AI researcher with Master’s-level AI training at Northwestern. Mother of a child with reading disabilities.

Sudhir Dadi

Motor policies, voice pipeline, arm integration. Director of Engineering at Lumen, managing 40 engineers. Deep voice & audio expertise.

Andreea Turcu

Data pipeline, calibration, quality assurance. Head of Global Training at H2O.ai. The motor policies are only as good as the data she curates.

robot readers in schools and libraries around the world.

Every library. Every classroom. Every grandparent’s house.

A patient, tireless reader for every child who needs one.

240 million children are waiting

for someone to read to them.

We built the reader.

...and the dataset.

ladybug.bot
